
什么是APM系统

APM(Application Performance Management)即应用性能管理系统,是对企业系统即时监控以实现对应用程序性能管理和故障管理的系统化的解决方案。应用性能管理,主要指对企业的关键业务应用进行监测、优化,提高企业应用的可靠性和质量,保证用户得到良好的服务,降低IT总拥有成本。

APM系统是可以帮助理解系统行为、用于分析性能问题的工具,以便发生故障的时候,能够快速定位和解决问题。

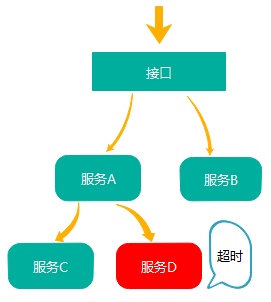

说白了就是随着微服务的的兴起,传统的单体应用拆分为不同功能的小应用,用户的一次请求会经过多个系统,不同服务之间的调用非常复杂,其中任何一个系统出错都可能影响整个请求的处理结果。为了解决这个问题,Google 推出了一个分布式链路跟踪系统 Dapper ,之后各个互联网公司都参照Dapper 的思想推出了自己的分布式链路跟踪系统,而这些系统就是分布式系统下的APM系统。

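There are many APM systems available, such as SkyWalking, Pinpoint and Zipkin:

- Zipkin: open-sourced by Twitter, a distributed tracing system that collects timing data from services to troubleshoot latency problems in microservice architectures. It covers data collection, storage, lookup and visualization.

- Pinpoint: an APM tool for large-scale distributed systems written in Java, open-sourced by the Korean company Naver.

- SkyWalking: an excellent APM project from China, a system for tracing, alerting on and analyzing the runtime behavior of distributed Java application clusters.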

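What is SkyWalking

SkyWalking was open-sourced by the Chinese open-source developer Wu Sheng (formerly an engineer at OneAPM, at Huawei at the time of writing) and donated to the Apache Incubator; it has since graduated to a top-level Apache project. It draws on the designs of Zipkin, Pinpoint and CAT, supports non-intrusive instrumentation, and is an application performance monitoring system built on distributed tracing.

SkyWalking's main features are:

- Automatic agents for multiple languages: Java, .NET Core and Node.js.

- Multiple monitoring approaches: language agents and service mesh.

- Lightweight and efficient, with no need to build a separate big-data platform.

- Modular architecture, with several options for the UI, storage and cluster management.

- Built-in alerting.

- Good visualization.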

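The overall architecture of SkyWalking is as follows:

It consists of three parts:

The agent collects the data, covering both tracing and metrics. It runs alongside the service (for Java, it is attached to the application's JVM) so that it can capture the data directly.

The OAP (Observability Analysis Platform) receives the data sent by the agents, aggregates and computes it in memory with its analysis engine (Analysis Core), and then writes the results to a storage backend such as Elasticsearch, MySQL or H2. OAP also provides an HTTP query interface through its query engine (Query Core).

SkyWalking ships a separate UI for viewing the data: the UI calls the interfaces provided by OAP, fetches the data and displays it.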
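To make the agent → OAP → UI division of labor concrete, here is a deliberately simplified shell sketch of the kind of work the Analysis Core does. It is a toy, not SkyWalking's code: the one-line-per-span text format and the function name oap_aggregate are hypothetical, and real agents report trace segments to OAP over gRPC.

```shell
#!/usr/bin/env bash
# Toy model only (hypothetical format): each stdin line stands in for one span an agent
# reported, as "<service> <endpoint> <duration_ms>". Real agents send gRPC segments.
# oap_aggregate plays the role of the OAP Analysis Core: it folds raw spans into
# per-service call counts and average latency, the kind of metric the UI later queries.
oap_aggregate() {
  awk '
    NF == 3 && $3 ~ /^[0-9]+$/ { calls[$1]++; total[$1] += $3 }
    END {
      for (svc in calls)
        printf "%s calls=%d avg_ms=%.1f\n", svc, calls[svc], total[svc] / calls[svc]
    }
  ' | sort
}
# Example:
#   printf 'order-svc /create 120\norder-svc /create 80\nuser-svc /get 30\n' | oap_aggregate
```

For the example input it prints "order-svc calls=2 avg_ms=100.0" and "user-svc calls=1 avg_ms=30.0". The real OAP computes far richer metrics and persists them to the configured storage, which is where the UI's queries read them from.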

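Setting up the environment

As mentioned above, SkyWalking can store its backend data in Elasticsearch, MySQL, H2 and others. I use Elasticsearch here, and I deploy it as a standalone cluster so that it is easy to scale and can also collect logs from other applications.

Setting up Elasticsearch

Prerequisite: Kubernetes must be installed, because Elasticsearch is deployed as a cluster here. If you don't need a cluster, Kubernetes isn't required; see http://arthurjq.com/2021/01/13/elk/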

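To make ES easier to scale, nodes are split by role into master nodes, data nodes and client nodes. The overall architecture is as follows:

Where:

- The Elasticsearch data node Pods are deployed as a StatefulSet

- The Elasticsearch master node Pods are deployed as a Deployment

- The Elasticsearch client node Pods are deployed as a Deployment; their internal Service lets read/write requests reach the data nodes

- Kibana is deployed as a Deployment, and its Service is reachable from outside the Kubernetes cluster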
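The role split above comes down to three boolean flags per node in the ConfigMaps that follow. As a quick cross-check, this hypothetical shell helper (es_role_flags) prints the flags each manifest is expected to carry. It uses the Elasticsearch 7.8 node.master / node.data / node.ingest settings that these manifests use; 7.9 and later prefer node.roles.

```shell
#!/usr/bin/env bash
# Hypothetical helper: prints the node-role flags each elasticsearch.yml ConfigMap in this
# guide sets, so the three manifests can be checked against one table (ES 7.8 syntax).
es_role_flags() {
  case "$1" in
    master) printf 'node.master: true\nnode.data: false\nnode.ingest: false\n' ;;
    data)   printf 'node.master: false\nnode.data: true\nnode.ingest: false\n' ;;
    client) printf 'node.master: false\nnode.data: false\nnode.ingest: true\n' ;;
    *) echo "unknown role: $1 (expected master, data or client)" >&2; return 1 ;;
  esac
}
# Example: print the whole table
#   for r in master data client; do echo "== $r"; es_role_flags "$r"; done
```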

First create the elastic namespace (es-ns.yaml):

apiVersion: v1
kind: Namespace
metadata:
  name: elastic

Run kubectl apply -f es-ns.yaml to create it.

Deploying the ES master node

The manifest (es-master.yaml) is as follows:

---
apiVersion: v1
kind: ConfigMap
metadata:
  namespace: elastic
  name: elasticsearch-master-config
  labels:
    app: elasticsearch
    role: master
data:
  elasticsearch.yml: |-
    cluster.name: ${CLUSTER_NAME}
    node.name: ${NODE_NAME}
    discovery.seed_hosts: ${NODE_LIST}
    cluster.initial_master_nodes: ${MASTER_NODES}
    network.host: 0.0.0.0
    node:
      master: true
      data: false
      ingest: false
    xpack.security.enabled: true
    xpack.monitoring.collection.enabled: true
---
apiVersion: v1
kind: Service
metadata:
  namespace: elastic
  name: elasticsearch-master
  labels:
    app: elasticsearch
    role: master
spec:
  ports:
  - port: 9300
    name: transport
  selector:
    app: elasticsearch
    role: master
---
apiVersion: apps/v1
kind: Deployment
metadata:
  namespace: elastic
  name: elasticsearch-master
  labels:
    app: elasticsearch
    role: master
spec:
  replicas: 1
  selector:
    matchLabels:
      app: elasticsearch
      role: master
  template:
    metadata:
      labels:
        app: elasticsearch
        role: master
    spec:
      initContainers:
      - name: init-sysctl
        image: busybox:1.27.2
        command:
        - sysctl
        - -w
        - vm.max_map_count=262144
        securityContext:
          privileged: true
      containers:
      - name: elasticsearch-master
        image: docker.elastic.co/elasticsearch/elasticsearch:7.8.0
        env:
        - name: CLUSTER_NAME
          value: elasticsearch
        - name: NODE_NAME
          value: elasticsearch-master
        - name: NODE_LIST
          value: elasticsearch-master,elasticsearch-data,elasticsearch-client
        - name: MASTER_NODES
          value: elasticsearch-master
        - name: "ES_JAVA_OPTS"
          value: "-Xms512m -Xmx512m"
        ports:
        - containerPort: 9300
          name: transport
        volumeMounts:
        - name: config
          mountPath: /usr/share/elasticsearch/config/elasticsearch.yml
          readOnly: true
          subPath: elasticsearch.yml
        - name: storage
          mountPath: /data
      volumes:
      - name: config
        configMap:
          name: elasticsearch-master-config
      - name: "storage"
        emptyDir:
          medium: ""

---

Then run kubectl apply -f es-master.yaml to create the resources. Deployment has succeeded once the pod is in the Running state, for example:

# kubectl get pod -n elastic
NAME READY STATUS RESTARTS AGE
elasticsearch-master-77d5d6c9db-xt5kq 1/1 Running 0 67s

Deploying the ES data node

The manifest (es-data.yaml) is as follows:

---
apiVersion: v1
kind: ConfigMap
metadata:
  namespace: elastic
  name: elasticsearch-data-config
  labels:
    app: elasticsearch
    role: data
data:
  elasticsearch.yml: |-
    cluster.name: ${CLUSTER_NAME}
    node.name: ${NODE_NAME}
    discovery.seed_hosts: ${NODE_LIST}
    cluster.initial_master_nodes: ${MASTER_NODES}
    network.host: 0.0.0.0
    node:
      master: false
      data: true
      ingest: false
    xpack.security.enabled: true
    xpack.monitoring.collection.enabled: true
---
apiVersion: v1
kind: Service
metadata:
  namespace: elastic
  name: elasticsearch-data
  labels:
    app: elasticsearch
    role: data
spec:
  ports:
  - port: 9300
    name: transport
  selector:
    app: elasticsearch
    role: data
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  namespace: elastic
  name: elasticsearch-data
  labels:
    app: elasticsearch
    role: data
spec:
  serviceName: "elasticsearch-data"
  selector:
    matchLabels:
      app: elasticsearch
      role: data
  template:
    metadata:
      labels:
        app: elasticsearch
        role: data
    spec:
      # Elasticsearch runs as uid/gid 1000; fsGroup lets it write to the mounted PVC
      securityContext:
        fsGroup: 1000
      initContainers:
      - name: init-sysctl
        image: busybox:1.27.2
        command:
        - sysctl
        - -w
        - vm.max_map_count=262144
        securityContext:
          privileged: true
      containers:
      - name: elasticsearch-data
        image: docker.elastic.co/elasticsearch/elasticsearch:7.8.0
        env:
        - name: CLUSTER_NAME
          value: elasticsearch
        - name: NODE_NAME
          value: elasticsearch-data
        - name: NODE_LIST
          value: elasticsearch-master,elasticsearch-data,elasticsearch-client
        - name: MASTER_NODES
          value: elasticsearch-master
        - name: "ES_JAVA_OPTS"
          value: "-Xms1024m -Xmx1024m"
        ports:
        - containerPort: 9300
          name: transport
        volumeMounts:
        - name: config
          mountPath: /usr/share/elasticsearch/config/elasticsearch.yml
          readOnly: true
          subPath: elasticsearch.yml
        - name: elasticsearch-data-persistent-storage
          # default path.data of the official image, so the indices actually land on the PVC
          mountPath: /usr/share/elasticsearch/data
      volumes:
      - name: config
        configMap:
          name: elasticsearch-data-config
  volumeClaimTemplates:
  - metadata:
      name: elasticsearch-data-persistent-storage
    spec:
      accessModes: [ "ReadWriteOnce" ]
      # assumes a StorageClass with this name (e.g. from an NFS provisioner) exists in the cluster
      storageClassName: managed-nfs-storage
      resources:
        requests:
          storage: 20Gi

---

Run kubectl apply -f es-data.yaml to create the resources; deployment has succeeded once the pod status becomes Running.

# kubectl get pod -n elastic
NAME READY STATUS RESTARTS AGE
elasticsearch-data-0 1/1 Running 0 4s
elasticsearch-master-77d5d6c9db-gklgd 1/1 Running 0 2m35s
elasticsearch-master-77d5d6c9db-gvhcb 1/1 Running 0 2m35s
elasticsearch-master-77d5d6c9db-pflz6 1/1 Running 0 2m35s
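Instead of reading the STATUS column by eye after each kubectl apply, the check can be scripted. A minimal sketch, assuming the default column layout of kubectl get pod (NAME READY STATUS ...); the function name pods_not_ready is hypothetical:

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads `kubectl get pod` output on stdin and prints the names of
# pods that are not ready yet. Exit status 0 means every pod is Running with all of its
# containers ready (e.g. 1/1), or Completed (one-off job pods such as an init job).
pods_not_ready() {
  awk '
    $1 == "NAME" && $2 == "READY" { next }   # skip the header line
    NF >= 3 {
      split($2, ready, "/")
      ok = ($3 == "Running" && ready[1] == ready[2]) || $3 == "Completed"
      if (!ok) { print $1; bad = 1 }
    }
    END { exit bad }
  '
}
# Example: poll until everything in the namespace is ready
#   until kubectl get pod -n elastic | pods_not_ready >/dev/null; do sleep 5; done
```

kubectl also offers a built-in alternative that waits on the pods' Ready condition: kubectl wait --for=condition=Ready pod -l app=elasticsearch -n elastic --timeout=300s.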

Deploying the ES client node

The manifest (es-client.yaml) is as follows:

---
apiVersion: v1
kind: ConfigMap
metadata:
  namespace: elastic
  name: elasticsearch-client-config
  labels:
    app: elasticsearch
    role: client
data:
  elasticsearch.yml: |-
    cluster.name: ${CLUSTER_NAME}
    node.name: ${NODE_NAME}
    discovery.seed_hosts: ${NODE_LIST}
    cluster.initial_master_nodes: ${MASTER_NODES}
    network.host: 0.0.0.0
    node:
      master: false
      data: false
      ingest: true
    xpack.security.enabled: true
    xpack.monitoring.collection.enabled: true
---
apiVersion: v1
kind: Service
metadata:
  namespace: elastic
  name: elasticsearch-client
  labels:
    app: elasticsearch
    role: client
spec:
  ports:
  - port: 9200
    name: client
  - port: 9300
    name: transport
  selector:
    app: elasticsearch
    role: client
---
apiVersion: apps/v1
kind: Deployment
metadata:
  namespace: elastic
  name: elasticsearch-client
  labels:
    app: elasticsearch
    role: client
spec:
  selector:
    matchLabels:
      app: elasticsearch
      role: client
  template:
    metadata:
      labels:
        app: elasticsearch
        role: client
    spec:
      initContainers:
      - name: init-sysctl
        image: busybox:1.27.2
        command:
        - sysctl
        - -w
        - vm.max_map_count=262144
        securityContext:
          privileged: true
      containers:
      - name: elasticsearch-client
        image: docker.elastic.co/elasticsearch/elasticsearch:7.8.0
        env:
        - name: CLUSTER_NAME
          value: elasticsearch
        - name: NODE_NAME
          value: elasticsearch-client
        - name: NODE_LIST
          value: elasticsearch-master,elasticsearch-data,elasticsearch-client
        - name: MASTER_NODES
          value: elasticsearch-master
        - name: "ES_JAVA_OPTS"
          value: "-Xms256m -Xmx256m"
        ports:
        - containerPort: 9200
          name: client
        - containerPort: 9300
          name: transport
        volumeMounts:
        - name: config
          mountPath: /usr/share/elasticsearch/config/elasticsearch.yml
          readOnly: true
          subPath: elasticsearch.yml
        - name: storage
          mountPath: /data
      volumes:
      - name: config
        configMap:
          name: elasticsearch-client-config
      - name: "storage"
        emptyDir:
          medium: ""

Likewise, run kubectl apply -f es-client.yaml to create the resources; deployment has succeeded once the pod status becomes Running.

# kubectl get pod -n elastic
NAME READY STATUS RESTARTS AGE
elasticsearch-client-f79cf4f7b-pbz9d 1/1 Running 0 5s
elasticsearch-data-0 1/1 Running 0 3m11s
elasticsearch-master-77d5d6c9db-gklgd 1/1 Running 0 5m42s
elasticsearch-master-77d5d6c9db-gvhcb 1/1 Running 0 5m42s
elasticsearch-master-77d5d6c9db-pflz6 1/1 Running 0 5m42s

Generating passwords

We enabled the X-Pack security module to protect the cluster, so the cluster needs initial passwords. Run bin/elasticsearch-setup-passwords inside the client node container, as shown below, to generate passwords for the built-in users:

# kubectl exec $(kubectl get pods -n elastic | grep elasticsearch-client | sed -n 1p | awk '{print $1}') \
    -n elastic \
    -- bin/elasticsearch-setup-passwords auto -b
Changed password for user apm_system
PASSWORD apm_system = QNSdaanAQ5fvGMrjgYnM
Changed password for user kibana_system
PASSWORD kibana_system = UFPiUj0PhFMCmFKvuJuc
Changed password for user kibana
PASSWORD kibana = UFPiUj0PhFMCmFKvuJuc
Changed password for user logstash_system
PASSWORD logstash_system = Nqes3CCxYFPRLlNsuffE
Changed password for user beats_system
PASSWORD beats_system = Eyssj5NHevFjycfUsPnT
Changed password for user remote_monitoring_user
PASSWORD remote_monitoring_user = 7Po4RLQQZ94fp7F31ioR
Changed password for user elastic
PASSWORD elastic = n816QscHORFQMQWQfs4U
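The generated passwords only appear in this output, so it is worth saving it and pulling the elastic password out with a script rather than copying it by hand. A minimal sketch, assuming the "PASSWORD user = password" format shown above; es_password_for, the CLIENT_POD variable and the file name es-passwords.txt are hypothetical:

```shell
#!/usr/bin/env bash
# Hypothetical helper: extracts one user's password from the output of
# `bin/elasticsearch-setup-passwords auto -b`, whose result lines look like
# "PASSWORD <user> = <password>". Exits non-zero if the user is not found.
es_password_for() {
  awk -v user="$1" '
    $1 == "PASSWORD" && $2 == user && $3 == "=" { print $4; found = 1 }
    END { exit !found }
  '
}
# Example: save the output once, then feed the Secret from it
#   kubectl exec -n elastic "$CLIENT_POD" -- bin/elasticsearch-setup-passwords auto -b > es-passwords.txt
#   kubectl create secret generic elasticsearch-pw-elastic -n elastic \
#     --from-literal password="$(es_password_for elastic < es-passwords.txt)"
```

The saved file contains every built-in user's password, so keep it somewhere safe or delete it once the Secret exists.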

Note that the elastic user's password must also be stored in a Kubernetes Secret:

kubectl create secret generic elasticsearch-pw-elastic \
  -n elastic \
  --from-literal password=n816QscHORFQMQWQfs4U

Verifying the cluster status

kubectl exec -n elastic \
  $(kubectl get pods -n elastic | grep elasticsearch-client | sed -n 1p | awk '{print $1}') \
  -- curl -u elastic:n816QscHORFQMQWQfs4U 'http://elasticsearch-client.elastic:9200/_cluster/health?pretty'

{
  "cluster_name" : "elasticsearch",
  "status" : "green",
  "timed_out" : false,
  "number_of_nodes" : 3,
  "number_of_data_nodes" : 1,
  "active_primary_shards" : 2,
  "active_shards" : 2,
  "relocating_shards" : 0,
  "initializing_shards" : 0,
  "unassigned_shards" : 0,
  "delayed_unassigned_shards" : 0,
  "number_of_pending_tasks" : 0,
  "number_of_in_flight_fetch" : 0,
  "task_max_waiting_in_queue_millis" : 0,
  "active_shards_percent_as_number" : 100.0
}

The status above is green, which means the cluster is healthy. The ES cluster is now set up. For convenience, you can also deploy a Kibana service.
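The health check can also be turned into a pass/fail test, which is handy in scripts. A minimal sketch, assuming jq may not be available and relying on the one-key-per-line layout that ?pretty produces; the function name es_health_ok and the CLIENT_POD / ES_PASSWORD variables are hypothetical:

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads the JSON printed by GET _cluster/health?pretty on stdin and
# succeeds only if "status" is "green" and "number_of_nodes" equals the expected count.
es_health_ok() {
  local expected_nodes="$1" body status nodes
  body="$(cat)"
  status="$(printf '%s\n' "$body" | sed -n 's/.*"status" *: *"\([a-z]*\)".*/\1/p')"
  nodes="$(printf '%s\n' "$body" | sed -n 's/.*"number_of_nodes" *: *\([0-9]*\).*/\1/p')"
  [ "$status" = "green" ] && [ "$nodes" = "$expected_nodes" ]
}
# Example (this guide: 1 master + 1 data + 1 client = 3 nodes):
#   kubectl exec -n elastic "$CLIENT_POD" -- curl -s -u "elastic:$ES_PASSWORD" \
#     'http://elasticsearch-client.elastic:9200/_cluster/health?pretty' | es_health_ok 3 && echo healthy
```

If you would rather let Elasticsearch do the waiting, the same endpoint accepts wait_for_status=green and timeout parameters (e.g. _cluster/health?wait_for_status=green&timeout=60s).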

Kibana service

The manifest (kibana.yaml) is as follows:

---
apiVersion: v1
kind: ConfigMap
metadata:
  namespace: elastic
  name: kibana-config
  labels:
    app: kibana
data:
  kibana.yml: |-
    server.host: 0.0.0.0
    elasticsearch:
      hosts: ${ELASTICSEARCH_HOSTS}
      username: ${ELASTICSEARCH_USER}
      password: ${ELASTICSEARCH_PASSWORD}
---
apiVersion: v1
kind: Service
metadata:
  namespace: elastic
  name: kibana
  labels:
    app: kibana
spec:
  ports:
  - port: 5601
    name: webinterface
  selector:
    app: kibana
---
# networking.k8s.io/v1beta1 Ingress was removed in Kubernetes 1.22; newer clusters need
# networking.k8s.io/v1 (backend.service.name / backend.service.port.number, plus pathType)
apiVersion: networking.k8s.io/v1beta1
kind: Ingress
metadata:
  annotations:
    prometheus.io/http-probe: 'true'
    prometheus.io/scrape: 'true'
  name: kibana
  namespace: elastic
spec:
  rules:
  - host: kibana.coolops.cn
    http:
      paths:
      - backend:
          serviceName: kibana
          servicePort: 5601
        path: /
---
apiVersion: apps/v1
kind: Deployment
metadata:
  namespace: elastic
  name: kibana
  labels:
    app: kibana
spec:
  selector:
    matchLabels:
      app: kibana
  template:
    metadata:
      labels:
        app: kibana
    spec:
      containers:
      - name: kibana
        image: docker.elastic.co/kibana/kibana:7.8.0
        ports:
        - containerPort: 5601
          name: webinterface
        env:
        - name: ELASTICSEARCH_HOSTS
          value: "http://elasticsearch-client.elastic.svc.cluster.local:9200"
        - name: ELASTICSEARCH_USER
          value: "elastic"
        - name: ELASTICSEARCH_PASSWORD
          valueFrom:
            secretKeyRef:
              name: elasticsearch-pw-elastic
              key: password
        volumeMounts:
        - name: config
          mountPath: /usr/share/kibana/config/kibana.yml
          readOnly: true
          subPath: kibana.yml
      volumes:
      - name: config
        configMap:
          name: kibana-config
---

Then run kubectl apply -f kibana.yaml to create Kibana, and check that its pod is Running.

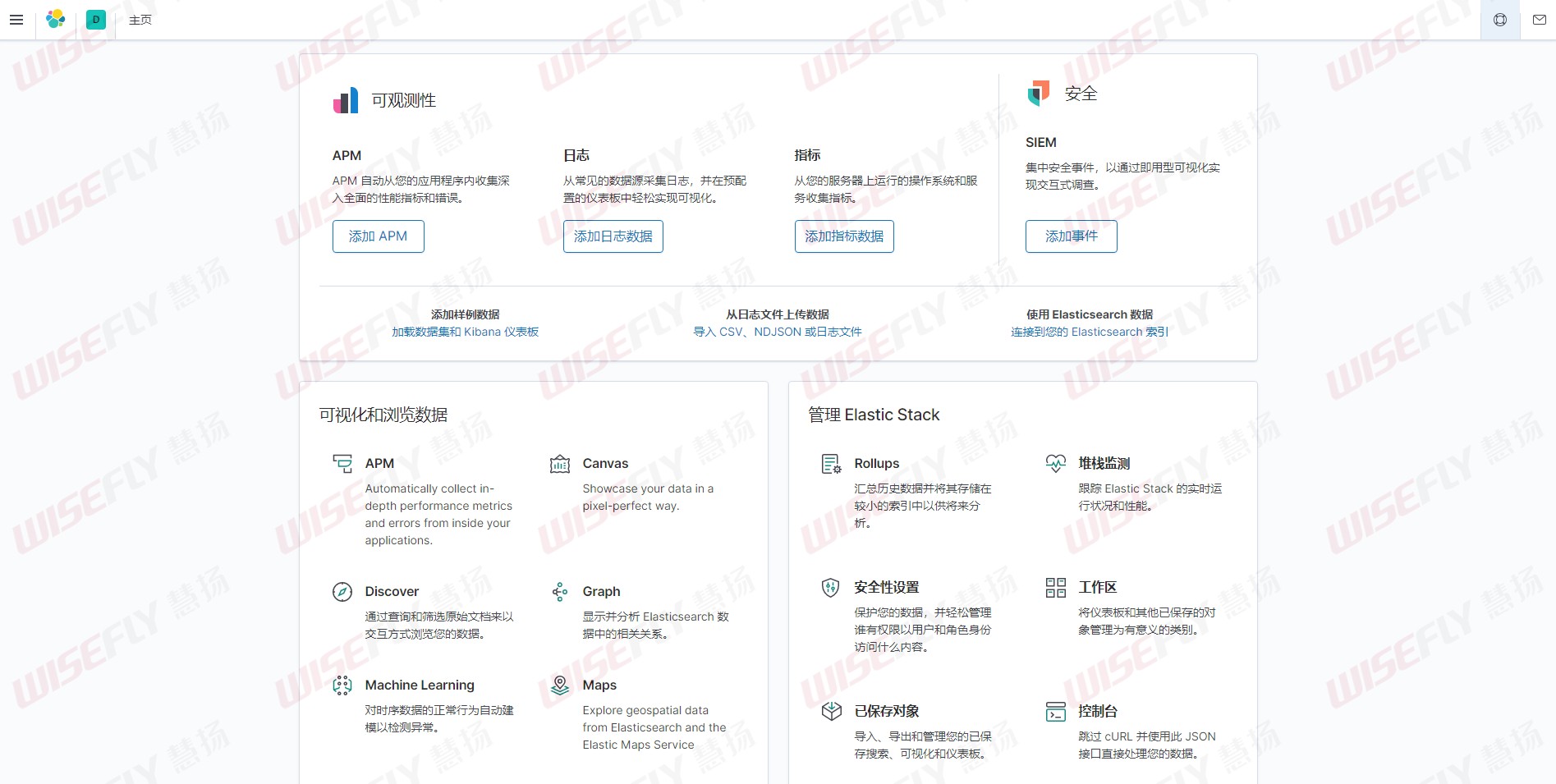

Log in with the elastic user and the generated password stored in the Secret created above.
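If the password is no longer at hand, it can be read back from the Secret. Kubernetes stores Secret data base64-encoded, so it has to be decoded; a minimal sketch with a hypothetical helper name, decode_secret_value:

```shell
#!/usr/bin/env bash
# Hypothetical helper: Kubernetes stores Secret data base64-encoded; this decodes one value.
decode_secret_value() {
  printf '%s' "$1" | base64 -d
}
# Example (the Secret created earlier in this guide):
#   decode_secret_value "$(kubectl get secret elasticsearch-pw-elastic -n elastic -o jsonpath='{.data.password}')"
```

If kibana.coolops.cn does not resolve in your environment, kubectl port-forward -n elastic svc/kibana 5601:5601 makes Kibana reachable at http://localhost:5601 without the Ingress.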

After logging in, the interface looks like this:

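Setting up the SkyWalking server

Installing with Helm

Install Helm (Helm 3 is used here):

wget https://get.helm.sh/helm-v3.0.0-linux-amd64.tar.gz
tar zxvf helm-v3.0.0-linux-amd64.tar.gz
mv linux-amd64/helm /usr/bin/

Note: Helm 3 no longer has the Tiller server component and talks to the cluster directly using your kubeconfig, so installing it on a master node, where a kubeconfig is typically already available, is recommended.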

Download the SkyWalking Kubernetes charts:

mkdir /home/install/package -p
cd /home/install/package
git clone https://github.com/apache/skywalking-kubernetes.git

Go into the chart directory and install. Create the skywalking namespace first, because Helm 3.0 does not create it for you:

kubectl create ns skywalking
cd skywalking-kubernetes/chart
helm repo add elastic https://helm.elastic.co
helm dep up skywalking
helm install my-skywalking skywalking -n skywalking \
  --set elasticsearch.enabled=false \
  --set elasticsearch.config.host=elasticsearch-client.elastic.svc.cluster.local \
  --set elasticsearch.config.port.http=9200 \
  --set elasticsearch.config.user=elastic \
  --set elasticsearch.config.password=n816QscHORFQMQWQfs4U
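The Elasticsearch connection settings live only in these --set flags. As far as I know, Helm 3 carries earlier --set values into a helm upgrade automatically only when the upgrade supplies no values of its own (or when --reuse-values is given), so any upgrade that passes new --set or -f values needs them again. One way to keep install and upgrade consistent is a small helper that prints the flags; the function name sw_es_set_flags and the ES_PASSWORD variable are hypothetical:

```shell
#!/usr/bin/env bash
# Hypothetical helper: prints, one per line, the --set flags that point the SkyWalking
# chart at the external Elasticsearch cluster (same keys as the install command above).
sw_es_set_flags() {
  if [ "$#" -ne 4 ]; then
    echo "usage: sw_es_set_flags <host> <http-port> <user> <password>" >&2
    return 2
  fi
  printf '%s\n' \
    --set elasticsearch.enabled=false \
    --set "elasticsearch.config.host=$1" \
    --set "elasticsearch.config.port.http=$2" \
    --set "elasticsearch.config.user=$3" \
    --set "elasticsearch.config.password=$4"
}
# Example (bash 4+ for mapfile):
#   mapfile -t flags < <(sw_es_set_flags elasticsearch-client.elastic.svc.cluster.local 9200 elastic "$ES_PASSWORD")
#   helm install my-skywalking skywalking -n skywalking "${flags[@]}"
```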

Check that all pods are Running:

# kubectl get pod -n skywalking
NAME READY STATUS RESTARTS AGE
my-skywalking-es-init-x89pr 0/1 Completed 0 15h
my-skywalking-oap-694fc79d55-2dmgr 1/1 Running 0 16h
my-skywalking-oap-694fc79d55-bl5hk 1/1 Running 4 16h
my-skywalking-ui-6bccffddbd-d2xhs 1/1 Running 0 16h

You can also check the installed chart release with the following command:

# helm list --all-namespaces
NAME NAMESPACE REVISION UPDATED STATUS CHART APP VERSION
my-skywalking skywalking 1 2020-09-29 14:42:10.952238898 +0800 CST deployed skywalking-3.1.0 8.1.0

To change the configuration, edit the chart's values.yaml (skywalking/values.yaml) directly. For example, to change the my-skywalking-ui Service to NodePort:

.....
ui:
  name: ui
  replicas: 1
  image:
    repository: apache/skywalking-ui
    tag: 8.1.0
    pullPolicy: IfNotPresent
  ....
  service:
    type: NodePort
    # clusterIP: None
    externalPort: 80
    internalPort: 8080
  ....

Then upgrade the release with the following command, run from the chart directory and using the same release name as the install:

helm upgrade my-skywalking skywalking -n skywalking

Then check whether the Service has changed to NodePort.

# kubectl get svc -n skywalking
NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE
my-skywalking-oap ClusterIP 10.109.109.131 <none> 12800/TCP,11800/TCP 88s
my-skywalking-ui NodePort 10.102.247.110 <none> 80:32563/TCP 88s

You can now open the SkyWalking UI through that NodePort (32563 in this output) on any node.
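Finding the NodePort can be scripted too. A minimal sketch, assuming the default kubectl get svc columns; the function name node_port_of and the node-ip placeholder are hypothetical:

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads `kubectl get svc` output on stdin and prints the first
# NodePort of the named Service, taken from a PORT(S) entry such as "80:32563/TCP".
node_port_of() {
  awk -v svc="$1" '
    $1 == svc {
      for (i = 2; i <= NF; i++)
        if ($i ~ /^[0-9]+:[0-9]+\//) { split($i, p, "[:/]"); print p[2]; found = 1; exit }
    }
    END { exit !found }
  '
}
# Example:
#   echo "http://<node-ip>:$(kubectl get svc -n skywalking | node_port_of my-skywalking-ui)"
```

Parsing the table is fragile if the columns change; kubectl get svc my-skywalking-ui -n skywalking -o jsonpath='{.spec.ports[0].nodePort}' asks the API for the same number directly.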

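Connecting applications to the SkyWalking agent

With the SkyWalking server installed, the next step is connecting applications, which means loading the SkyWalking agent when the application starts. Here are two ways to add the agent in a container: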

- Package the files the agent needs into the application image when building it

- Add the agent to the application container as a sidecar
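Both approaches copy the same agent directory from the downloaded package (apache-skywalking-apm-bin/agent, per the Dockerfiles in this section) into an image, so it is worth checking that directory before building. A minimal sketch; the function name sw_agent_dir_ok is hypothetical, and it only checks the files this guide relies on:

```shell
#!/usr/bin/env bash
# Hypothetical helper: sanity-checks an unpacked SkyWalking Java agent directory.
# It checks only the agent jar, its config file and the plugins directory.
sw_agent_dir_ok() {
  local dir="${1:?usage: sw_agent_dir_ok <agent-dir>}"
  local missing=0 f
  for f in skywalking-agent.jar config/agent.config; do
    [ -f "$dir/$f" ] || { echo "missing file: $dir/$f" >&2; missing=1; }
  done
  [ -d "$dir/plugins" ] || { echo "missing directory: $dir/plugins" >&2; missing=1; }
  return "$missing"
}
# Example, after unpacking the agent package:
#   sw_agent_dir_ok apache-skywalking-apm-bin/agent && docker build -t <your-image> .
```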

First download the matching agent package:

wget https://mirrors.tuna.tsinghua.edu.cn/apache/skywalking/8.1.0/apache-skywalking-apm-8.1.0.tar.gz
tar xf apache-skywalking-apm-8.1.0.tar.gz

Packaging the agent files into the application image

Write a Dockerfile like the one below and build the image. This is the simpler approach.

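FROM harbor-test.coolops.com/coolops/jdk:8u144_test
RUN mkdir -p /usr/skywalking/agent/
ADD apache-skywalking-apm-bin/agent/ /usr/skywalking/agent/

Note: this Dockerfile builds the base image our applications are packaged on, not the application's own Dockerfile.

To add the agent package as a sidecar instead, first build an image that contains only the agent: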

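FROM busybox:latest
ENV LANG=C.UTF-8
RUN set -eux && mkdir -p /usr/skywalking/agent/
ADD apache-skywalking-apm-bin/agent/ /usr/skywalking/agent/
WORKDIR /

Then write the Deployment manifest as follows. The init container copies the agent into a shared emptyDir volume, which the application container mounts at /usr/skywalking/agent.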

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    name: demo-sw
  name: demo-sw
spec:
  replicas: 1
  selector:
    matchLabels:
      name: demo-sw
  template:
    metadata:
      labels:
        name: demo-sw
    spec:
      initContainers:
      - image: innerpeacez/sw-agent-sidecar:latest
        name: sw-agent-sidecar
        imagePullPolicy: IfNotPresent
        command: ['sh']
        args: ['-c','mkdir -p /skywalking/agent && cp -r /usr/skywalking/agent/* /skywalking/agent']
        volumeMounts:
        - mountPath: /skywalking/agent
          name: sw-agent
      containers:
      - image: harbor.coolops.cn/skywalking-java:1.7.9
        name: demo
        # one argv element per item; Kubernetes substitutes $(SW_AGENT_NAME) from env below
        command:
        - java
        - -javaagent:/usr/skywalking/agent/skywalking-agent.jar
        - -Dskywalking.agent.service_name=$(SW_AGENT_NAME)
        - -jar
        - demo.jar
        volumeMounts:
        - mountPath: /usr/skywalking/agent
          name: sw-agent
        ports:
        - containerPort: 80
        env:
        - name: SW_AGENT_COLLECTOR_BACKEND_SERVICES
          value: 'my-skywalking-oap.skywalking.svc.cluster.local:11800'
        - name: SW_AGENT_NAME
          value: cartechfin-open-platform-skywalking
      volumes:
      - name: sw-agent
        emptyDir: {}

When starting the application, all you need to do is load the SkyWalking javaagent, as follows:

java -javaagent:/path/to/skywalking-agent/skywalking-agent.jar -Dskywalking.agent.service_name=${SW_AGENT_NAME} -jar yourApp.jar
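For images that bake the agent in, the same arguments can be assembled in an entrypoint script from the environment variables used in the Deployment above. A minimal sketch: the function name sw_java_args and the SW_AGENT_JAR variable are hypothetical, while the skywalking.-prefixed system properties are the agent's documented way to override agent.config entries. The 8.x agent.config also reads SW_AGENT_NAME and SW_AGENT_COLLECTOR_BACKEND_SERVICES itself, so the explicit -D flags mainly make the settings visible on the command line.

```shell
#!/usr/bin/env bash
# Hypothetical entrypoint helper: prints the JVM arguments (one per line) that attach the
# SkyWalking agent, reading the same env vars as the Deployment in this guide.
#   SW_AGENT_NAME                        service name shown in the UI (required)
#   SW_AGENT_COLLECTOR_BACKEND_SERVICES  OAP gRPC address (defaults to 127.0.0.1:11800)
#   SW_AGENT_JAR                         agent jar path (hypothetical; defaults to this guide's path)
sw_java_args() {
  local app_jar="${1:?usage: sw_java_args <app.jar>}"
  local agent_jar="${SW_AGENT_JAR:-/usr/skywalking/agent/skywalking-agent.jar}"
  local service="${SW_AGENT_NAME:?SW_AGENT_NAME must be set}"
  local backend="${SW_AGENT_COLLECTOR_BACKEND_SERVICES:-127.0.0.1:11800}"
  printf '%s\n' \
    "-javaagent:${agent_jar}" \
    "-Dskywalking.agent.service_name=${service}" \
    "-Dskywalking.collector.backend_service=${backend}" \
    -jar "$app_jar"
}
# Example entrypoint (bash 4+):
#   mapfile -t args < <(sw_java_args demo.jar)
#   exec java "${args[@]}"
```

Printing one argument per line and reading them back with mapfile keeps values with spaces intact, which a single unquoted string passed to java would not.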